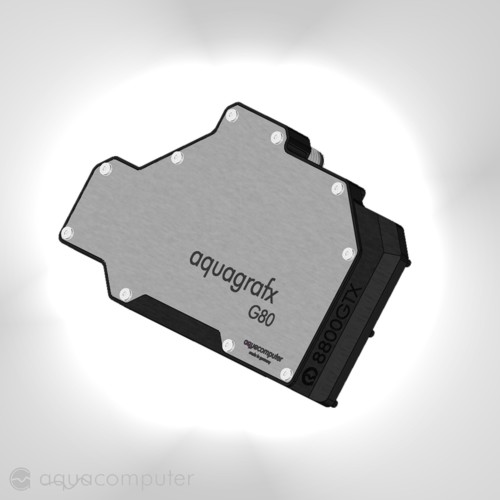

View on the G80 architecture

Compared to NVIDIA's previous generation of graphics boards, clock speeds did not increase dramatically, yet performance-wise the 8800 is out of reach for GeForce 7900 cards. The key to its higher performance lies in the all-new GPU architecture, which counts 681 million transistors and is built around a large number of programmable units, or as NVIDIA likes to call them: 'stream processors'.

What exactly are those thingies?

Well, for starters you have to know that every image on your screen starts as code generated by your game/OS software, which DirectX and the VGA drivers translate into logical data. From this data a picture is built up (vertex shader), painted over (pixel shader) and then rasterized onto your screen (see the picture above). With the previous G70 GPU, separate parts of the GPU were reserved for vertex calculations only or for pixel calculations only; for example, a GPU could have 24 pixel shading processors and 8 vertex shading processors. The number of vertex and pixel processors, together with their rated clock speed, determined how well the GPU would perform in a given 3D scene.

Now, in some cases this might work out very well, but because the number of each type of shader is fixed, some parts of the GPU can sit idle even though the GPU is heavily loaded. For example, with 24 pixel shaders and 8 vertex shaders the GPU wouldn't function that well in a scene where massive pixel calculations are required. The 24 pixel shaders would be giving their very best while the 8 vertex shaders might be loaded for only 20%. To solve this problem NVIDIA came up with unified, programmable shaders in their newest GPU architecture design. Those shaders can be vertex shaders, but they can be pixel shaders as well! It's the software that defines how your GPU is built up internally, giving a healthy performance boost compared to past-generation GPUs.
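To make the idle-shader problem a bit more tangible, here is a minimal sketch of it in Python. The workload numbers are made up for illustration, and the unified pool is assumed to hold the same 32 units as the fixed 24 + 8 split so that the comparison is fair; it is a toy model, not a description of how the G70 or G80 actually schedule work.

```python
def fixed_pipeline_time(pixel_work, vertex_work, pixel_units=24, vertex_units=8):
    """Time to finish a frame when each unit type can only do its own job.

    The frame is done when the slower of the two groups is done.
    """
    return max(pixel_work / pixel_units, vertex_work / vertex_units)


def vertex_utilization(pixel_work, vertex_work, pixel_units=24, vertex_units=8):
    """Fraction of the frame time during which the vertex units are busy."""
    frame_time = fixed_pipeline_time(pixel_work, vertex_work, pixel_units, vertex_units)
    return (vertex_work / vertex_units) / frame_time


def unified_pipeline_time(pixel_work, vertex_work, units=32):
    """Time to finish the same frame when every unit can take any kind of work."""
    return (pixel_work + vertex_work) / units


# Hypothetical pixel-heavy scene: 960 units of pixel work, 64 of vertex work.
PIXEL_WORK, VERTEX_WORK = 960, 64

print(fixed_pipeline_time(PIXEL_WORK, VERTEX_WORK))    # 40.0
print(vertex_utilization(PIXEL_WORK, VERTEX_WORK))     # 0.2
print(unified_pipeline_time(PIXEL_WORK, VERTEX_WORK))  # 32.0
```

In this toy scene the vertex units are busy only 20% of the time in the fixed design, while the unified pool finishes the same work in 32 instead of 40 time units.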

An easy way to understand the G80 architecture is by looking at the following picture:

Unified shader processors are able to do any kind of shader calculation: vertex, pixel, geometry... it doesn't matter, as it is all programmable through software. This block is called the shader domain. Besides that, we find a second important part inside the G80. This part handles blending operations, AA effects and so on, and is called the ROP (raster operation) domain. In previous-generation GPUs there was only one clock speed, the GPU core clock, and it was the same for the shader/pixel pipes and the ROPs. With the G80, NVIDIA has stepped away from this: the stream processors get a clock speed of their own instead of being tied directly to the GPU core clock. So, compared to older VGA cards, we now have 3 clock speeds to set, 3 domains to overclock:

GPU core/ROP domain,

GPU core/shader domain and

GDDR3 DRAM domain.

G80 overclocking explained

Remember this picture from the previous page?

Real-time clock speeds are a bit off from what they should be. The explanation for this abnormality lies in how the cards are built. G80 boards are based on a 27MHz crystal, and with the help of multipliers/dividers we get the reference 513MHz core clock. It seems, though, that GeForce 8800 cards come with a limited number of multipliers/dividers, as the clock speed only tends to increase in steps of 9/18/27MHz instead of the classic way where we overclock our card per MHz. Here are the available clock speeds per domain:
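The arithmetic behind these figures can be checked in a few lines of Python. The sketch below assumes, purely for illustration, that reachable clocks sit on a 9MHz grid (one third of the 27MHz crystal); that granularity is inferred from the 9/18/27MHz steps mentioned above and is not a documented NVIDIA specification. The names `crystal_ratio` and `on_step_grid` are our own.

```python
from fractions import Fraction

CRYSTAL_MHZ = 27   # crystal frequency quoted in the article
ASSUMED_GRID_MHZ = 9  # assumption: smallest step seen when overclocking


def crystal_ratio(clock_mhz):
    """Return clock / crystal as an exact fraction (the net multiplier/divider)."""
    return Fraction(clock_mhz, CRYSTAL_MHZ)


def on_step_grid(clock_mhz):
    """True if the clock is a whole multiple of the assumed 9MHz grid.

    Being on the grid is necessary under this assumption, but it does not
    guarantee the card actually offers that clock.
    """
    return clock_mhz % ASSUMED_GRID_MHZ == 0


print(crystal_ratio(513))  # 19, i.e. 513MHz = 19 x 27MHz
print(crystal_ratio(621))  # 23
print(on_step_grid(500))   # False: a round 500MHz is not on the grid
```

This is why a nominal round number such as 500MHz shows up in the logs as 513MHz instead.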

We recommend always logging your clock speeds when overclocking your GeForce 8800, because there are more oddities to reveal. If you wanted to overclock your GPU clock to, let's say, 621MHz, then going by the chart above you would have to slide the GPU clock in RivaTuner anywhere between 620MHz and 648MHz. This makes sense, but in real life the 'overclock zones' are defined a bit differently: anywhere from 617MHz to 634MHz actually sets the GPU core to 621MHz. We did not find the reason why the overclock zones are defined differently than one would have thought, but the fact is that they are, and that is why you always need to log your clock speed with RivaTuner: it is the only way of knowing the true clock speed.
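One possible reading of the 617MHz to 634MHz zone, offered as a guess rather than a confirmed explanation, is that the card simply snaps the requested clock to the nearest available step. The sketch below assumes the steps around 621MHz are 612, 621 and 648MHz; that short list is hypothetical and chosen only because it reproduces the zone quoted above.

```python
# Hypothetical excerpt of available core clocks around 621MHz (an assumption,
# not taken from the clock chart).
ASSUMED_STEPS_MHZ = [612, 621, 648]


def snap_to_step(requested_mhz, steps=ASSUMED_STEPS_MHZ):
    """Return the assumed step closest to the requested clock.

    On an exact tie, min() keeps the first (lower) step in the list.
    """
    return min(steps, key=lambda step: abs(step - requested_mhz))


# Requests from 617 to 634 all land on 621 under this assumption.
for requested in (616, 617, 634, 635):
    print(requested, "->", snap_to_step(requested))
```

Under these assumed steps, 616MHz falls back to 612MHz and 635MHz jumps to 648MHz, which matches the observed zone boundaries. Logging the real clock in RivaTuner remains the only way to be sure.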

The last oddity we would like to share with our readers is that there is no Windows software available that is capable of overclocking the shaders independently. This doesn't mean we cannot overclock the stream processors. Although they run at a much higher speed than the ROP domain of the GPU, there seems to be some connection between the two domains. When we tried to overclock our GPU through RivaTuner we noticed that not only did the ROP domain increase its clock, the shader domain also changes clock speed when the GPU clock is set to certain values. Keeping in mind the overclocking zones we talked about above, this is how the 8800 GPU overclocks:

Table provided by SF3D and t024484 from the XtremeSystems forums.

Once we went through our 10-way 8800 GTS roundup we found that some cards didn't match the clocking table above. This led us to believe that the shader clock speed can be altered through the VGA BIOS. On the next page we will guide you through the 8800 VGA BIOS ->
